In the post "A matter of state" I introduced you to the three basic building blocks that I use to build software systems:

- Commands: Commands capture the user's intent. They contain the data needed to transition into a desired future state.

- Events: Events record decisions taken in the past, usually as a result of responding to a command.

- State: Represents the data and structure of an entity at a given point in time (usually current time).
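To make the three building blocks concrete, here is a minimal Python sketch. The names `GrantDiscount`, `DiscountGranted` and `OrderState` are hypothetical and only serve as illustration; they are not taken from any library.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class GrantDiscount:
    """Command: captures the user's intent, named in the imperative."""
    order_id: str
    percentage: int


@dataclass(frozen=True)
class DiscountGranted:
    """Event: records a decision that was taken, named in the past tense."""
    order_id: str
    percentage: int
    occurred_at: datetime


@dataclass
class OrderState:
    """State: the data of an entity at a given point in time."""
    order_id: str
    total: float
    discount_percentage: int = 0


# A command leads to an event, and the event is reflected in state.
command = GrantDiscount(order_id="order-1", percentage=10)
event = DiscountGranted(command.order_id, command.percentage, datetime.now(timezone.utc))
state = OrderState(order_id="order-1", total=200.0, discount_percentage=event.percentage)
```

Commands and events are immutable here (`frozen=True`) because an intent or a recorded fact should not change after creation, while state is expected to change over time.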

Using these three base primitives together with Event Modeling I can analyse and model business processes.

From the resulting models it then becomes possible to derive processing patterns by looking at the transitions between commands, events and state.

In total there are 9 such transitions between commands, events and state.

Taxonomy

For each of these transitions I have a go-to design pattern and a default implementation of that pattern.

These 9 patterns are:

| In \ Out | Commands | Events | State |
|---|---|---|---|
| Commands | Delegation | Aggregate Root | Downstream Activity |
| Events | Reaction | Event Processing | Projection |
| State | Task Processing | Event Generator | State Transformation |

Note: this table reads from left to right, with the input in the row and the output in the column: command in -> events out = Aggregate Root.
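The table can also be read as a simple lookup from (input, output) to pattern name. The sketch below encodes it that way; the `Kind` enum and the `pattern_for` function are hypothetical names used only for illustration.

```python
from enum import Enum


class Kind(Enum):
    COMMAND = "command"
    EVENT = "event"
    STATE = "state"


# (input kind, output kind) -> pattern name, copied from the table.
PATTERNS = {
    (Kind.COMMAND, Kind.COMMAND): "Delegation",
    (Kind.COMMAND, Kind.EVENT): "Aggregate Root",
    (Kind.COMMAND, Kind.STATE): "Downstream Activity",
    (Kind.EVENT, Kind.COMMAND): "Reaction",
    (Kind.EVENT, Kind.EVENT): "Event Processing",
    (Kind.EVENT, Kind.STATE): "Projection",
    (Kind.STATE, Kind.COMMAND): "Task Processing",
    (Kind.STATE, Kind.EVENT): "Event Generator",
    (Kind.STATE, Kind.STATE): "State Transformation",
}


def pattern_for(incoming: Kind, outgoing: Kind) -> str:
    """Return the processing pattern for a transition between two kinds."""
    return PATTERNS[(incoming, outgoing)]
```

For example, `pattern_for(Kind.COMMAND, Kind.EVENT)` returns `"Aggregate Root"`.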

I didn't invent any of these patterns. Instead, I selected them from various industry books and online literature.

- CQRS/DDD/ES: Task Processing, Aggregate Root & Projection

- EDA: Event Generator, Event Processing & Downstream Activity

- GOF: Delegation & Reaction

- SOA: State Transformation (Data Model Transformation)

In the upcoming sections I'll discuss them in this order and try to refer to their origins.

They all fit nicely together though, as the output of one fits the input of others.

CQRS/DDD/ES Patterns

Task Processing, Aggregate Root & Projection are the most common patterns for systems supporting business processes.

These patterns stem from the collective work of Greg Young, Eric Evans and Martin Fowler, and have been built upon by many others in the CQRS/DDD/ES community.

These are also the three patterns that are highly recommended by the Event Modeling methodology.

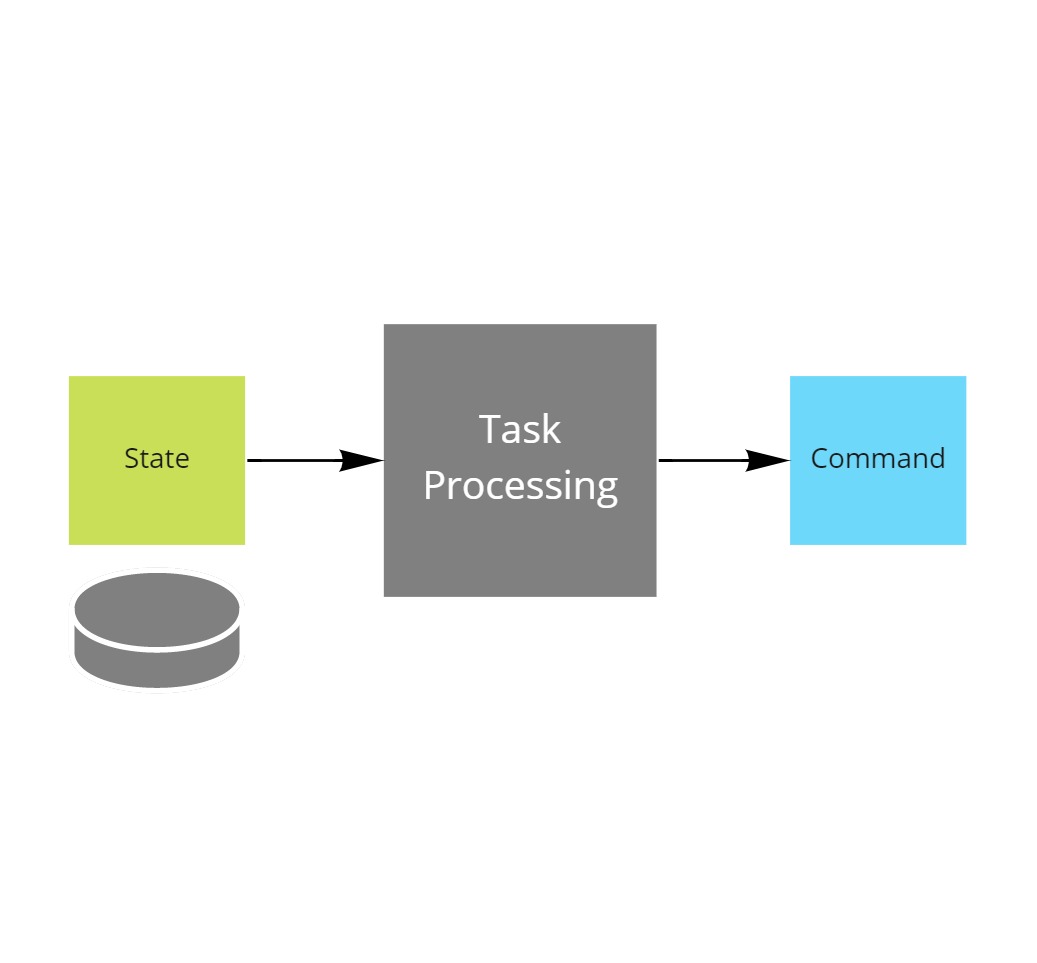

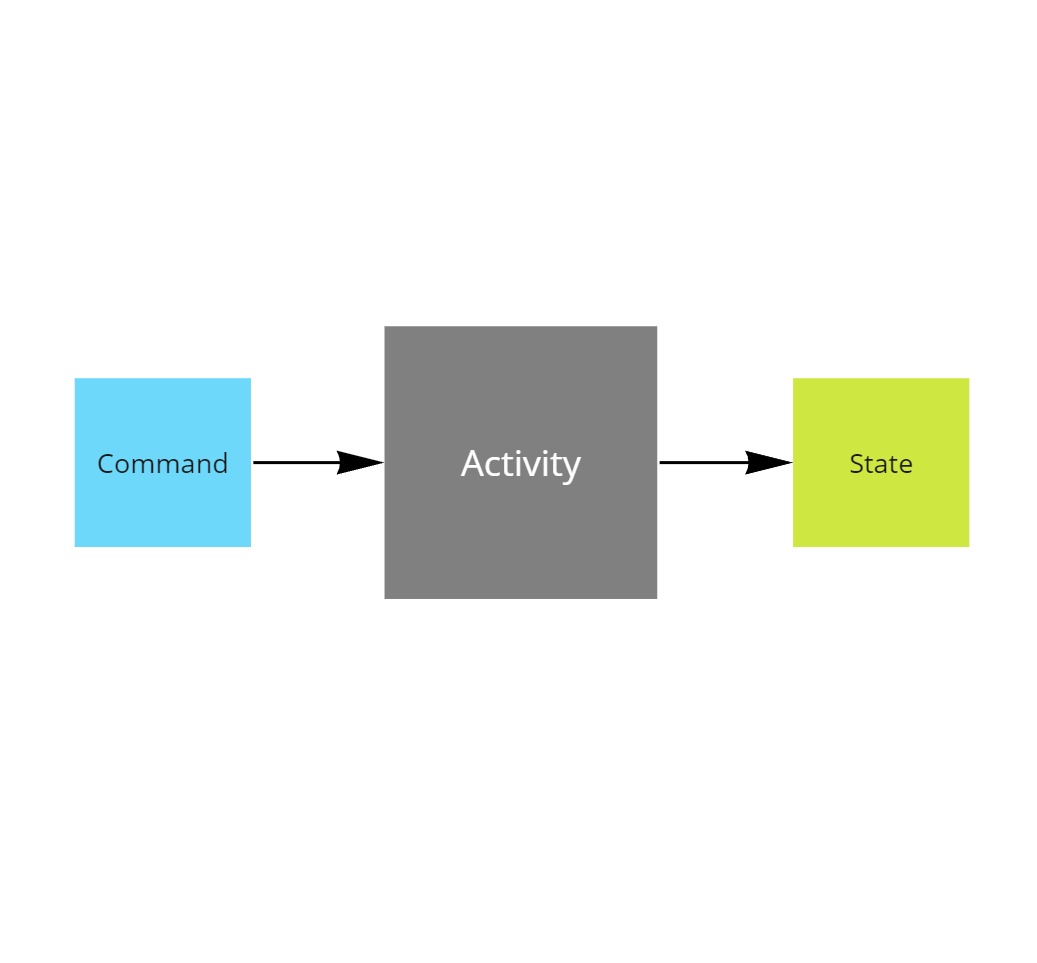

Task Processing

Task processing interprets state as 'todo lists' and decides which command should be issued to progress entities in their lifecycle.

In most systems, especially in the early stages, a lot of the task processing is performed by the users.

The system presents state to them in a user interface, e.g. a list of orders to process, and they decide which commands to issue in order to progress these entities further down their lifecycle, e.g. grant a discount.

The system offers them the correct widgets to issue those commands, typically via a Task Oriented User Interface.

The Task Oriented UI concept is derived from Microsoft's Inductive User Interface guidelines, and was further refined by the CQRS/DDD/ES community.

When the rules to issue certain commands are crystal clear, the system can automate task processing. E.g. all orders from gold customers get a 10% discount.

From a technical perspective this pattern can be implemented using a background worker which scans the state (read directly from a database, taken from a task queue or pulled from an API), interprets it, and invokes a specific command when the rules are met.

When the command is issued the worker typically marks the task as done so that it doesn't process the task twice.
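A minimal sketch of such an automated worker, using the gold customer discount rule as the example. The `OrderTask` and `GrantDiscount` types and the `send` callback are assumptions made for illustration; a real worker would read tasks from a database or queue and run on a schedule.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class OrderTask:
    """State interpreted as an item on a 'todo list'."""
    order_id: str
    customer_tier: str
    done: bool = False


@dataclass(frozen=True)
class GrantDiscount:
    order_id: str
    percentage: int


def process_tasks(tasks: Iterable[OrderTask], send: Callable[[GrantDiscount], None]) -> None:
    """Perform one scan over the state and issue commands where the rule is met."""
    for task in tasks:
        if task.done:
            continue  # already handled during an earlier scan
        if task.customer_tier == "gold":
            send(GrantDiscount(task.order_id, percentage=10))
            task.done = True  # mark as done so the task is not processed twice
```

Tasks that do not match the rule are left untouched in this sketch, so that a person can still pick them up through the user interface.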

People often want feedback on the success or failure of the issued commands. To achieve this you can combine the task processing pattern with Aggregate Root plus Projection, or with Downstream Activity.

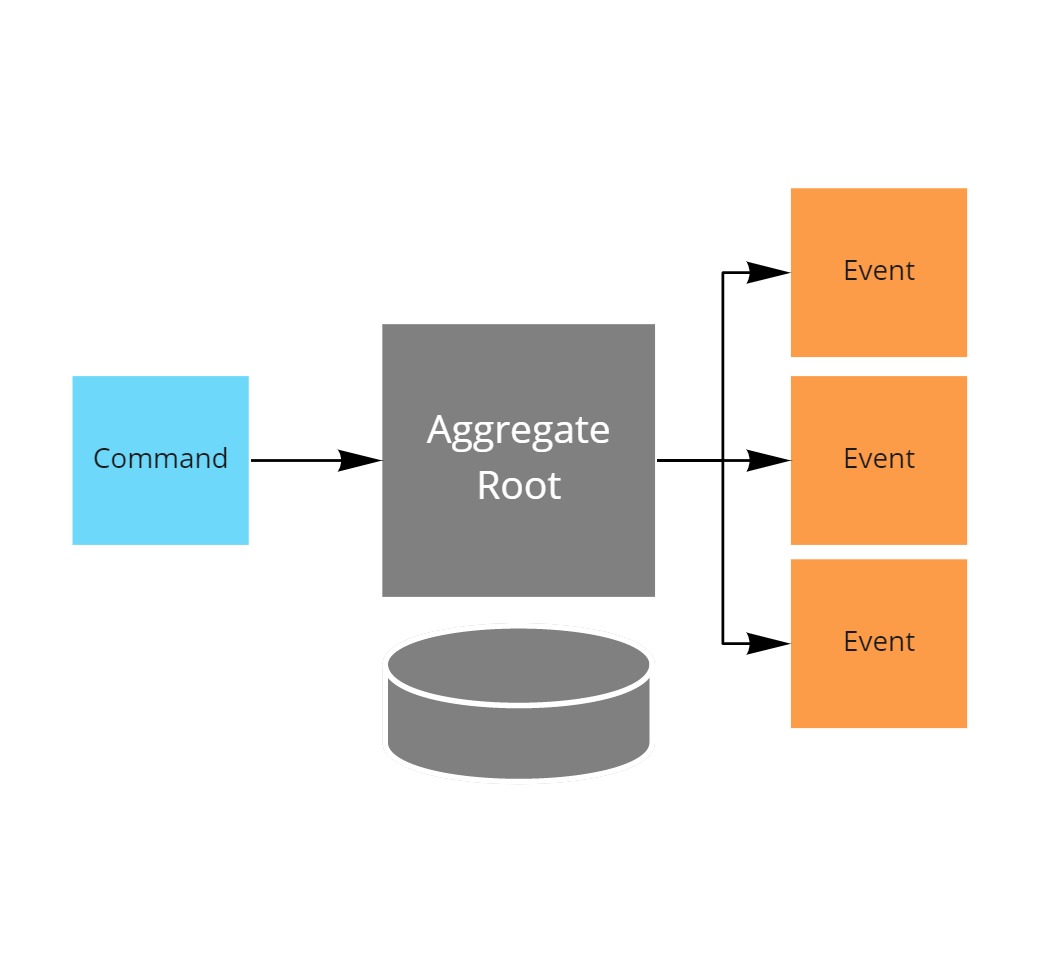

Aggregate Root

An aggregate root, as defined in the book Domain Driven Design (DDD) by Eric Evans, guarantees the consistency of changes made to entities and value objects encapsulated within the aggregate.

An aggregate root can guarantee this consistency by forbidding external objects from holding references to its members, which prevents them from manipulating those members directly.

Instead external objects must issue commands to the aggregate root, which will then perform the changes on its members on behalf of the issuer while maintaining consistency and validating all invariants.

When the aggregate root is done making the changes, it will publish events to anyone else interested in the outcome.

E.g. a SalesOrder aggregate will validate that a booking contains eligible product items for the sale and raise a SalesOrderBooked event if the booking is allowed.

Very often the Aggregate Root pattern is internally implemented using Event Sourcing.

Event sourcing means that the internal state of the aggregate root is reconstructed from the events it previously emitted.

In practice all my aggregate root implementations will be event sourced, yet this isn't mandatory.
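The following is a minimal sketch of an event sourced aggregate root, based on the SalesOrder example. The eligible item set, the method names and the `pending_events` list are assumptions made for illustration and do not represent a particular framework's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SalesOrderBooked:
    order_id: str
    items: tuple


class SalesOrder:
    ELIGIBLE_ITEMS = {"book", "pen"}  # hypothetical catalogue of eligible products

    def __init__(self, order_id, history=()):
        self.order_id = order_id
        self.booked = False
        self.pending_events = []  # events decided on but not yet published
        for event in history:
            self._apply(event)  # rebuild internal state from earlier events

    def book(self, items):
        """Command handler: validate the invariants, then record the decision."""
        if self.booked:
            raise ValueError("order is already booked")
        if not items or not set(items) <= self.ELIGIBLE_ITEMS:
            raise ValueError("booking contains ineligible items")
        event = SalesOrderBooked(self.order_id, tuple(items))
        self._apply(event)
        self.pending_events.append(event)

    def _apply(self, event):
        """State changes only happen here, for new and replayed events alike."""
        if isinstance(event, SalesOrderBooked):
            self.booked = True
```

Because state only changes inside `_apply`, replaying the history produces the same state as handling the original command did.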

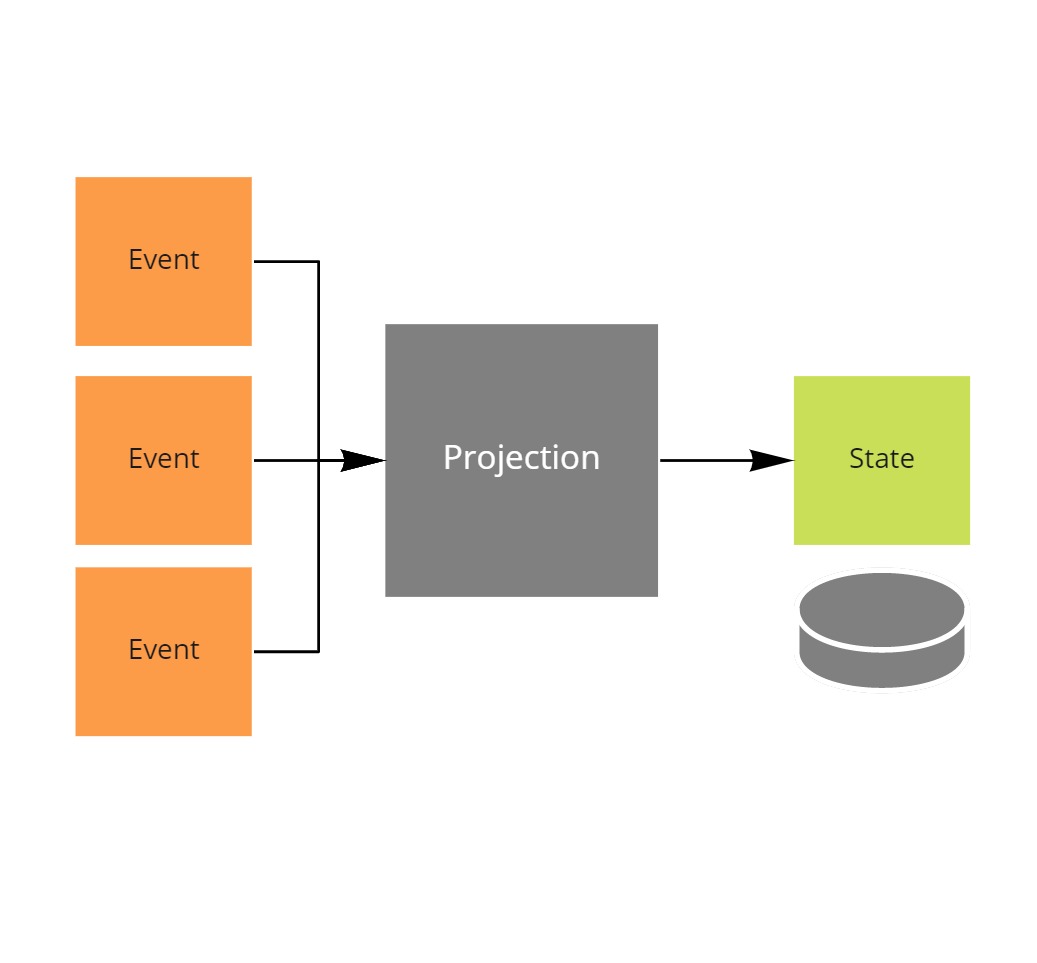

Projection

Most humans are not able to plug themselves into a stream of events and make sense of it.

Instead they prefer to be shown some sort of a summary that is adequate for the job at hand.

Turning event streams into such a summary is the responsibility of a projection.

The output of this projection can then be stored in a database and shown on a screen.

Projections are often used by the user as confirmation that the command they invoked previously has succeeded or failed, e.g. by showing the granted discount on the original list of orders the user was processing.

When a projection is used as confirmation it is recommended to have session-level consistency with the processed command. Otherwise people get confused and will invoke the command multiple times.

For other projections, eventual consistency models are fine.
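As a minimal sketch, a projection can be written as a fold over the event stream. The event types `OrderPlaced` and `DiscountGranted` and the shape of the resulting rows are hypothetical; in a real system the rows would be stored in a database rather than returned.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderPlaced:
    order_id: str
    total: float


@dataclass(frozen=True)
class DiscountGranted:
    order_id: str
    percentage: int


def project_order_list(events):
    """Turn a stream of events into a summary suited for an order list screen."""
    rows = {}
    for event in events:
        if isinstance(event, OrderPlaced):
            rows[event.order_id] = {"total": event.total, "discount": 0}
        elif isinstance(event, DiscountGranted) and event.order_id in rows:
            rows[event.order_id]["discount"] = event.percentage
    return rows
```

Projecting an `OrderPlaced("o1", 200.0)` followed by a `DiscountGranted("o1", 10)` yields a single row for `o1` with a discount of 10, which is the confirmation the user is looking for.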

EDA Patterns

Not all systems match nicely to a business process though. Some have other goals, such as monitoring and control, or have integration requirements with systems that do not follow the command > event > state paradigm.

For many of these needs the Event-driven architecture (EDA) patterns are a good fit.

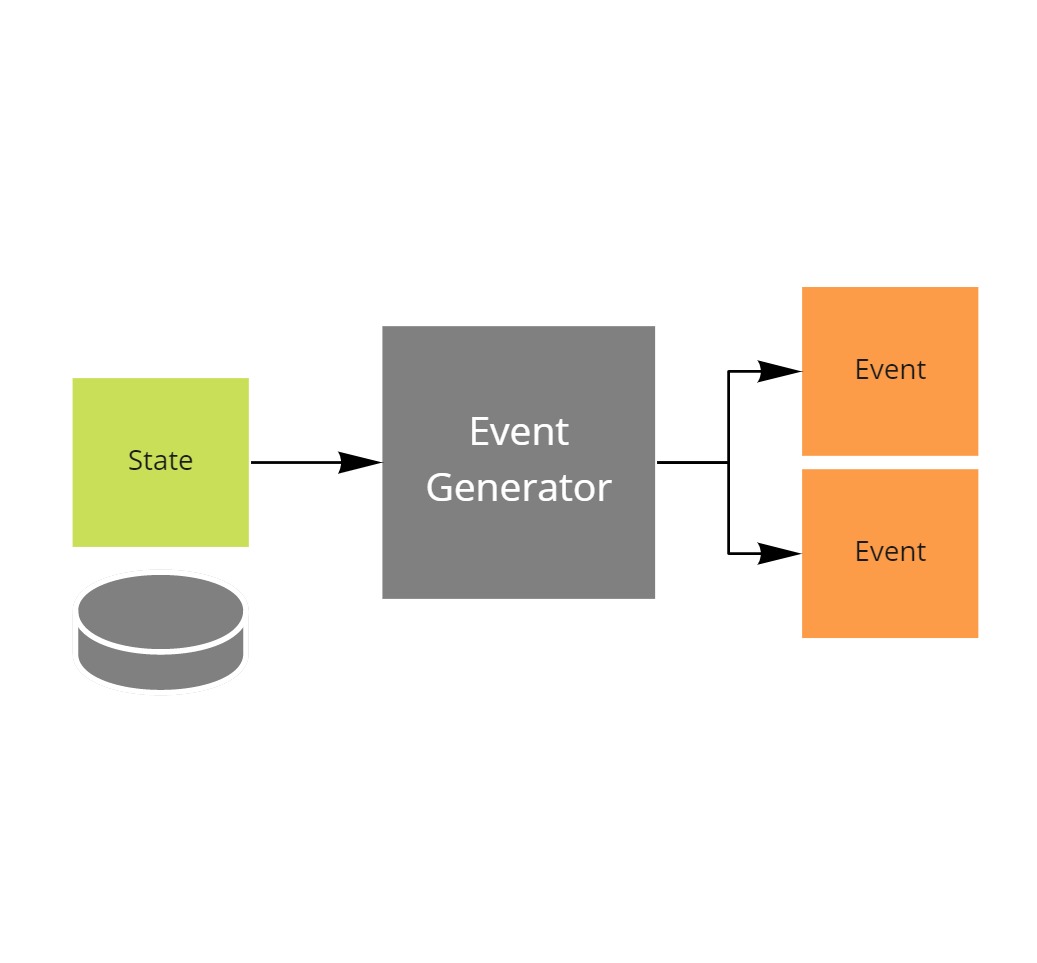

Event Generator

An event generator detects changes in state and reports those changes as events that can be understood by the rest of the system.

Some examples of event generators could be:

- A motion detection sensor connected to the network which reports people or objects passing by

- A webhook invoked by a third party service when a payment has completed

- A change data capture system interpreting changes in another system's database

- ...

They all have the same traits in common: they derive events from changes in state.

This pattern is very natural in an IoT environment, but it can also be used to retrofit state from another system to an event oriented model.
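One way to sketch an event generator is to compare two snapshots of state and report the differences as events, which is roughly what a polling style of change data capture does. The event types and the `detect_changes` function below are hypothetical.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class RowAdded:
    key: str
    value: Any


@dataclass(frozen=True)
class RowChanged:
    key: str
    old: Any
    new: Any


@dataclass(frozen=True)
class RowRemoved:
    key: str
    value: Any


def detect_changes(previous: dict, current: dict) -> list:
    """Derive events from the difference between two snapshots of state."""
    events = []
    for key in sorted(current.keys() - previous.keys()):
        events.append(RowAdded(key, current[key]))
    for key in sorted(current.keys() & previous.keys()):
        if previous[key] != current[key]:
            events.append(RowChanged(key, previous[key], current[key]))
    for key in sorted(previous.keys() - current.keys()):
        events.append(RowRemoved(key, previous[key]))
    return events
```

The keys are sorted only to keep the output order predictable. Snapshot comparison cannot see intermediate changes between two scans, which is a known limitation of this approach.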

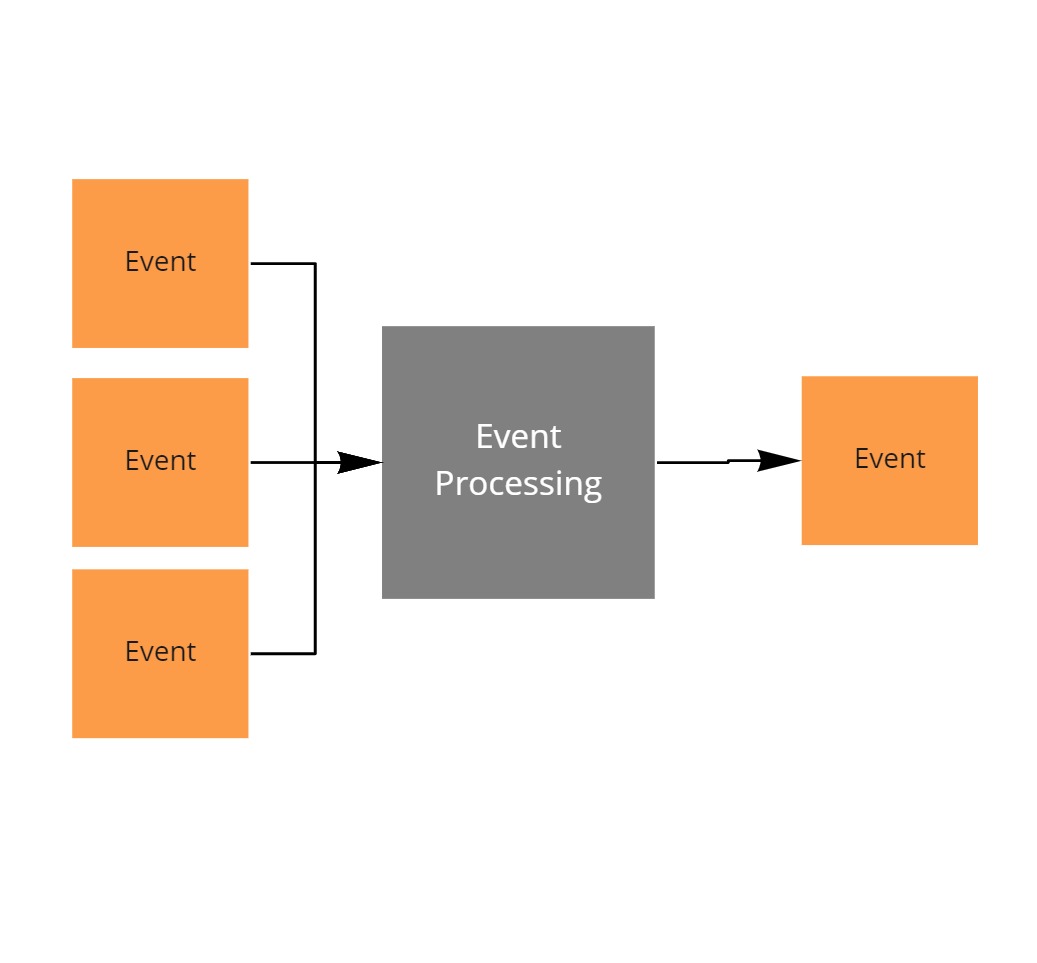

Event Processing

Event processing refers to the practice of interpreting actual events and then performing operations in response to patterns detected.

There are different event processing styles:

- Simple Event Processing: Each operation maps to a single processed event.

- Stream Event Processing: Each operation maps to a pattern detected from a stream of similar events.

- Complex Event Processing (CEP): Each operation maps to a pattern detected from a combination of different events.

- Online Event Processing (OLEP): Indicates that the events come from a historic datastore instead of being processed in real time.

Operations that can be performed may include:

- Aggregations: e.g. calculations such as sum, mean, standard deviation

- Pattern detection: e.g. windowing, anomaly detection

- Analytics: e.g. predicting a future event based on patterns in the stream, such as predicting an overflow event when water levels keep rising

- Transformations: e.g. changing the event format

- Enrichment: e.g. combining the data points in an event with other data sources to create more context and meaning

As a result of executing these operations, an event stream processor typically emits new events to let any interested party know the outcome of the operation.
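As a minimal sketch of stream event processing, the function below detects a pattern (strictly rising water levels within a sliding window) and emits a new event when it occurs. The event types, the window size and the assumption that all readings come from a single sensor are illustrative choices.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class WaterLevelMeasured:
    sensor_id: str
    level_cm: float


@dataclass(frozen=True)
class OverflowPredicted:
    sensor_id: str
    last_level_cm: float


def predict_overflow(events, window_size=3):
    """Yield OverflowPredicted when the last `window_size` levels are strictly rising."""
    window = deque(maxlen=window_size)  # old readings drop out automatically
    for event in events:
        window.append(event.level_cm)
        levels = list(window)
        rising = all(a < b for a, b in zip(levels, levels[1:]))
        if len(levels) == window_size and rising:
            yield OverflowPredicted(event.sensor_id, event.level_cm)
```

Because the function is a generator, it consumes events one at a time and emits its own events as soon as the pattern is detected, so its output can feed another processor.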

Downstream Activity

A downstream activity represents the downstream consequences triggered in response to an event (which usually acts as a pseudo command) or in response to a proper command.

A few examples:

- In a mechanical system, an actuator could open a valve to apply pneumatic pressure, moving parts in the system.

- In an electrical system, it could be a relay that turns a motor on or off.

- In a cloud environment, it could be sending an email through an API call to a third party service, to confirm a funder's order.

Downstream activities often have some impact, either direct or indirect, on the physical world.

GOF Patterns

The book Design Patterns: Elements of Reusable Object-Oriented Software is one of the first well-known descriptions of design patterns. As it was written by four authors, it is often referred to as the Gang of Four (or GOF) book.

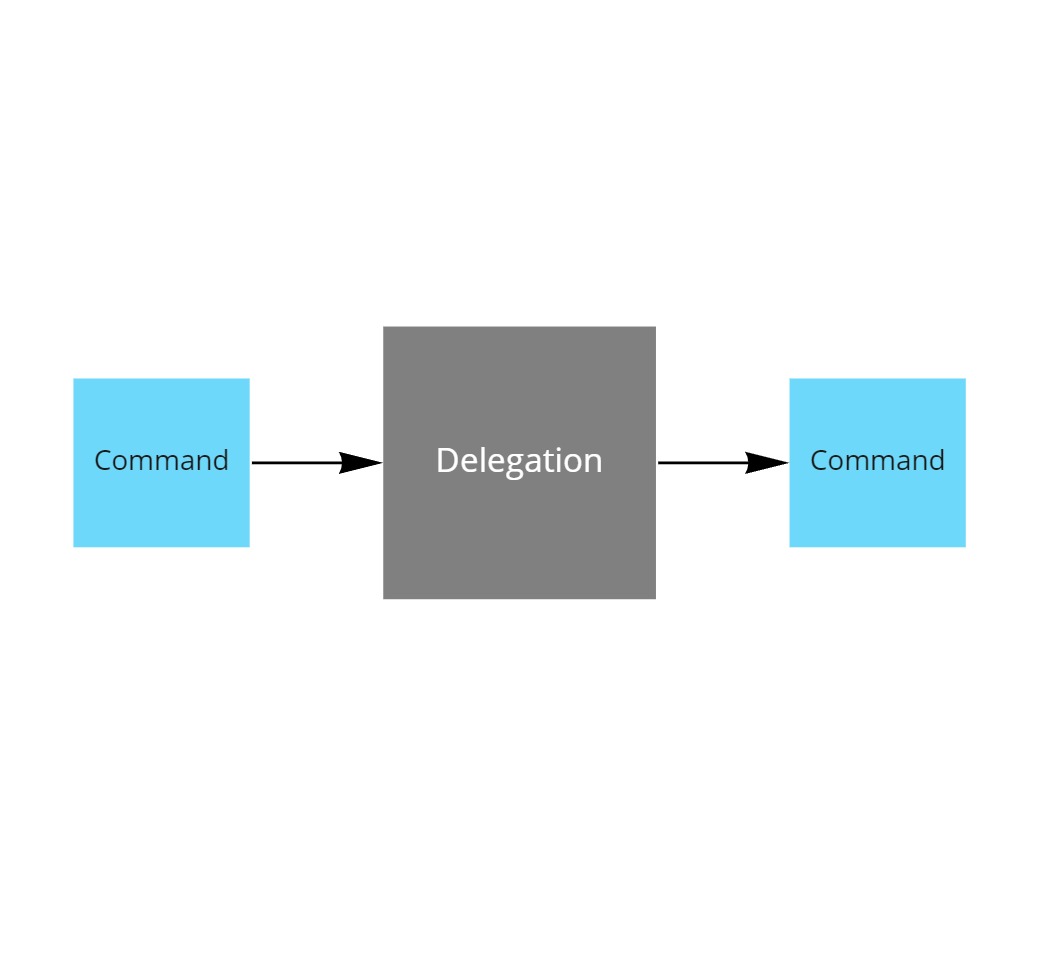

Delegation

In the GOF book, delegation is defined as follows: "Delegation is a way to make composition as powerful for reuse as inheritance [Lie86, JZ91]. In delegation, two objects are involved in handling a request: a receiving object delegates operations to its delegate."

I mainly use this pattern when I want to delegate the execution of a command either to another location or to a more specialized component.

There are seemingly simple commands that turn out to be bloody b**tch*s and are better left to the professionals. Sending email and processing payments are prime examples of this.
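A minimal sketch of delegating a command to a more specialized component. `SendEmail`, `EmailDelegate` and `RecordingEmailProvider` are hypothetical names; the recording provider merely stands in for a real third party email service.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class SendEmail:
    to: str
    subject: str


class EmailDelegate(Protocol):
    def send(self, command: SendEmail) -> None: ...


class RecordingEmailProvider:
    """Stand-in for a specialized email service; it only records what it was asked to send."""

    def __init__(self):
        self.outbox = []

    def send(self, command: SendEmail) -> None:
        self.outbox.append(command)


class EmailCommandHandler:
    """Receives the command and delegates its execution to the delegate."""

    def __init__(self, delegate: EmailDelegate):
        self._delegate = delegate

    def handle(self, command: SendEmail) -> None:
        self._delegate.send(command)
```

The handler only depends on the `EmailDelegate` protocol, so the specialized component can live in another process or be swapped for another provider without changing the issuer of the command.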

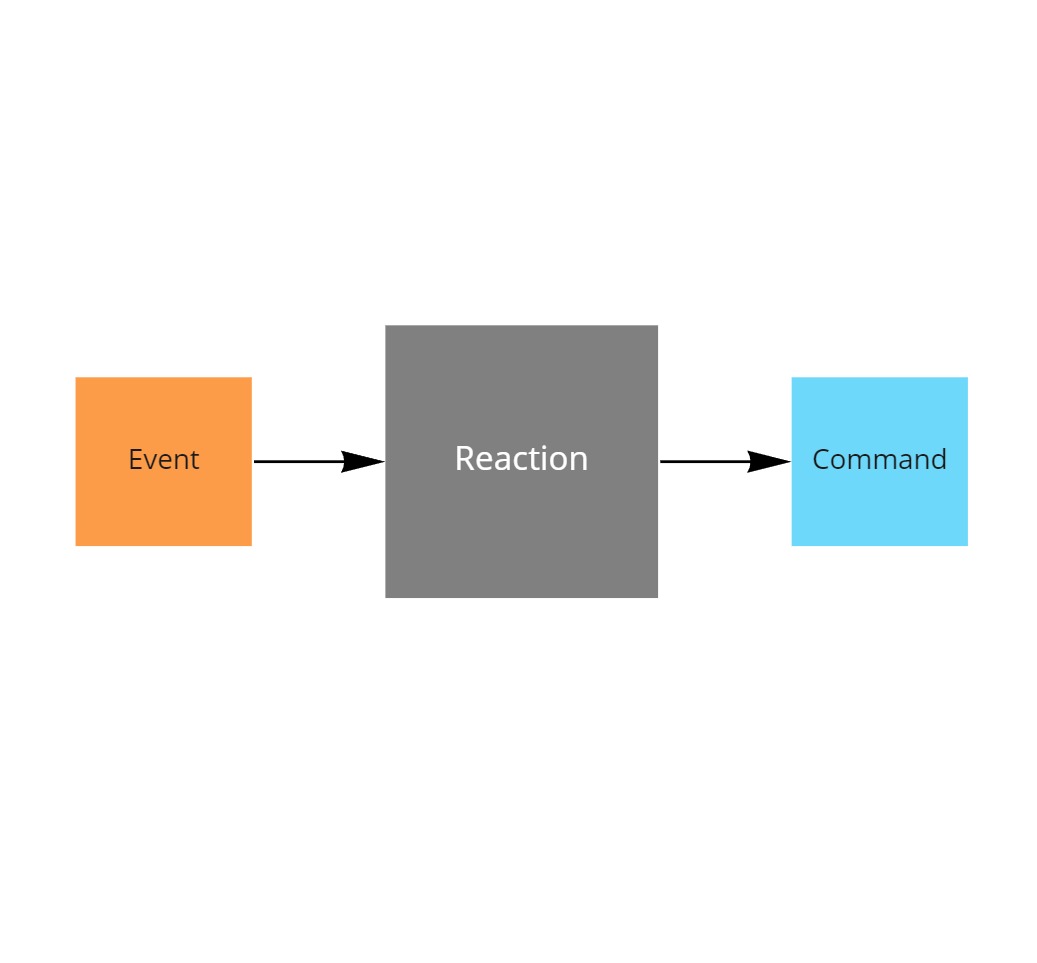

Reaction

The reaction design pattern is an event handling pattern for handling service requests delivered concurrently to a service handler by one or more inputs. The service handler then demultiplexes the incoming requests and dispatches them synchronously to the associated request handlers.

It is actually defined, under the name Reactor, in the book "Pattern-Oriented Software Architecture, Volume 2: Patterns for Concurrent and Networked Objects", but given that it is a derivative of the GOF's Observer pattern, I decided to include it along with delegation.

I use this pattern every time something needs to happen in reaction to something else that happened before.

Typically I differentiate transient reactions from guaranteed reactions:

- Transient reactions are best effort and require fewer delivery guarantees (e.g. notifying someone through SignalR)

- Guaranteed reactions do require delivery guarantees (e.g. a legal obligation to notify someone of a successful payment)
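The difference between the two kinds of reactions can be sketched with a small in-process dispatcher. This is a simplification under stated assumptions: the `Reactions` class is hypothetical, and in-memory retries only approximate a delivery guarantee, which in a real system would typically rely on durable messaging.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PaymentSucceeded:
    payment_id: str


class Reactions:
    def __init__(self, max_attempts=3):
        self._handlers = defaultdict(list)
        self._max_attempts = max_attempts

    def on(self, event_type, handler, guaranteed=False):
        """Register a reaction to events of the given type."""
        self._handlers[event_type].append((handler, guaranteed))

    def dispatch(self, event):
        """Demultiplex the event to every reaction registered for its type."""
        for handler, guaranteed in self._handlers[type(event)]:
            attempts = self._max_attempts if guaranteed else 1
            for attempt in range(1, attempts + 1):
                try:
                    handler(event)
                    break
                except Exception:
                    # Transient reactions are best effort: a failure is dropped.
                    # Guaranteed reactions retry and surface the final failure.
                    if guaranteed and attempt == attempts:
                        raise
```

Surfacing the final failure of a guaranteed reaction gives the caller the chance to redeliver the event later instead of silently losing it.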

SOA Patterns

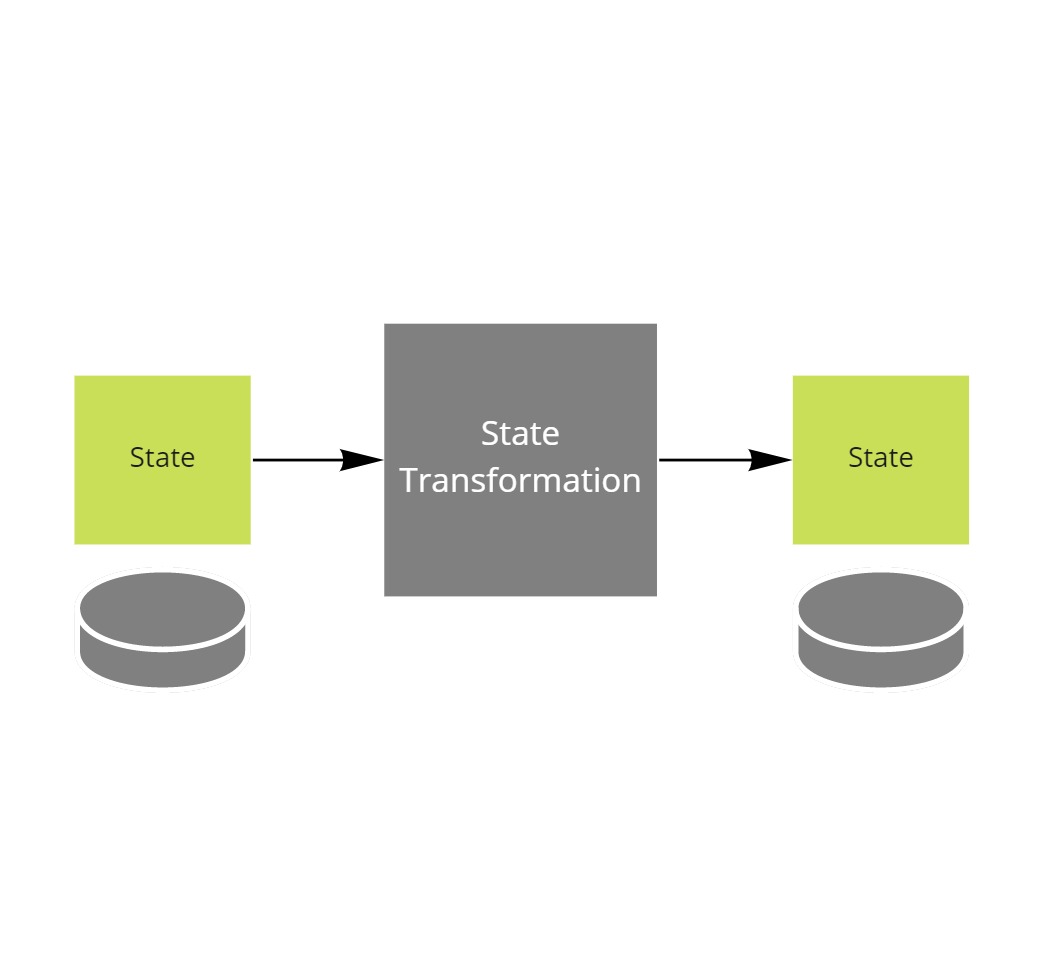

And finally we end up at the last processing pattern, a transformation from state to state.

In the services world this pattern has been documented by Thomas Erl in his SOA patterns book series.

Erl calls it Data Model Transformation.

State Transformation

This pattern has probably existed since the first computers were around, and it often translates into:

- ETL scripts

- Batch jobs

- Import/Export features

As I already dedicated an entire article, "A matter of state", to the downsides of this pattern, it's needless to say I'm not a big fan of it.
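For completeness, a state transformation usually boils down to mapping one data model onto another, as in the minimal sketch below. Both the source and the target field names are hypothetical.

```python
def transform_customer(source_row: dict) -> dict:
    """Map a row from a source data model onto a target data model."""
    first_name, _, last_name = source_row["full_name"].partition(" ")
    return {
        "id": source_row["customer_no"],
        "first_name": first_name,
        "last_name": last_name,
        "email": source_row["email"].lower(),
    }
```

Note that nothing in the output tells you why or when the data changed. Only the resulting state is carried over.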

Note on distribution

By combining the above 9 processing patterns, you can build any kind of system: from business process support and automation to IoT systems, from online APIs to offline web apps.

None of these patterns mandate distribution though. You can combine them inside a browser based offline web app without any distribution at all. But they all support distribution, so that when said web app needs to integrate with other parts of the system, it can, using the same patterns combined in a different way.

Bonus: EIP Patterns

When distribution inside the system, or execution in other systems, is required in response to a single command or event, then the Enterprise Integration Patterns (EIP) offer multiple options.

The author of these patterns, Gregor Hohpe, combined several of them into what he calls Conversational Patterns.

Of these conversational patterns, Choreography and Orchestration are the most interesting for transitioning from an event or a command, respectively, to other commands.

In practice these patterns offer two very different ways to fully automate a business process or to set up integration between disparate systems.

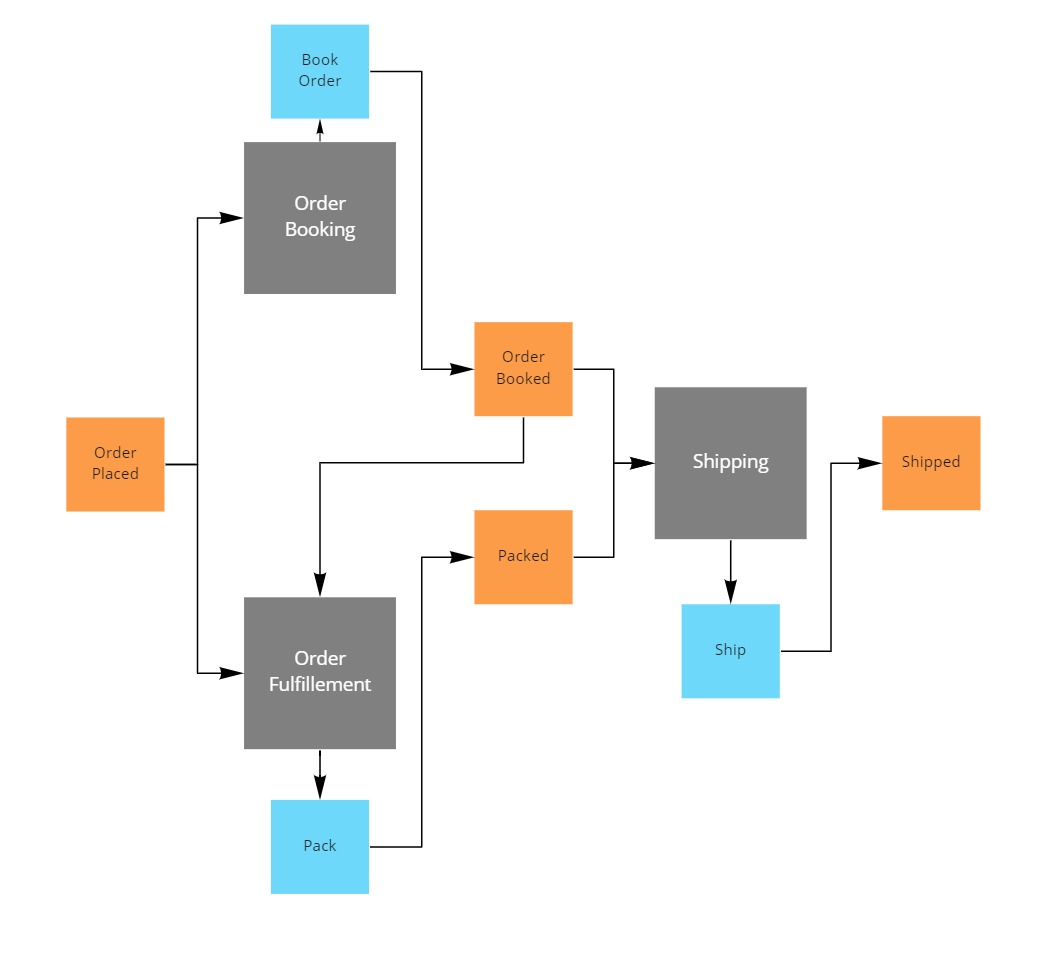

Choreography

In a choreography, each partner in the conversation tracks the communication with its partners itself.

It does so by listening to events emitted by those partners and responding to them by taking action locally (interpreting events as if they were commands), in turn emitting events as well.

Note that events are facts. They have already happened, so, unlike commands, their outcome is certain.

So in a choreography there is no failure path to design; all failures can be handled locally by each of the partners.

Therefore I prefer this pattern over orchestrations, which do suffer from the non-deterministic outcome of commands.

The most notable downside of this pattern is that it is invisible and requires stellar documentation.
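A minimal in-memory sketch of a choreography, in which each partner only knows which events it listens to and which events it emits. The `EventBus` class, the partner functions and the event names are hypothetical.

```python
from collections import defaultdict, deque


class EventBus:
    def __init__(self):
        self._subscribers = defaultdict(list)
        self.log = []  # every event that was published, in order

    def subscribe(self, event_name, handler):
        self._subscribers[event_name].append(handler)

    def publish(self, event_name, payload):
        queue = deque([(event_name, payload)])
        while queue:
            name, data = queue.popleft()
            self.log.append(name)
            for handler in self._subscribers[name]:
                # Each partner acts locally and returns the events it emits in turn.
                queue.extend(handler(data))


def payments(order):
    """Payment partner: reacts to OrderPlaced."""
    return [("PaymentReceived", order)]


def shipping(order):
    """Shipping partner: reacts to PaymentReceived."""
    return [("OrderShipped", order)]


bus = EventBus()
bus.subscribe("OrderPlaced", payments)
bus.subscribe("PaymentReceived", shipping)
bus.publish("OrderPlaced", {"order_id": "o1"})
```

After the publish call, `bus.log` contains `OrderPlaced`, `PaymentReceived` and `OrderShipped`, in that order. No component describes this flow as a whole, which illustrates why a choreography is invisible without documentation.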

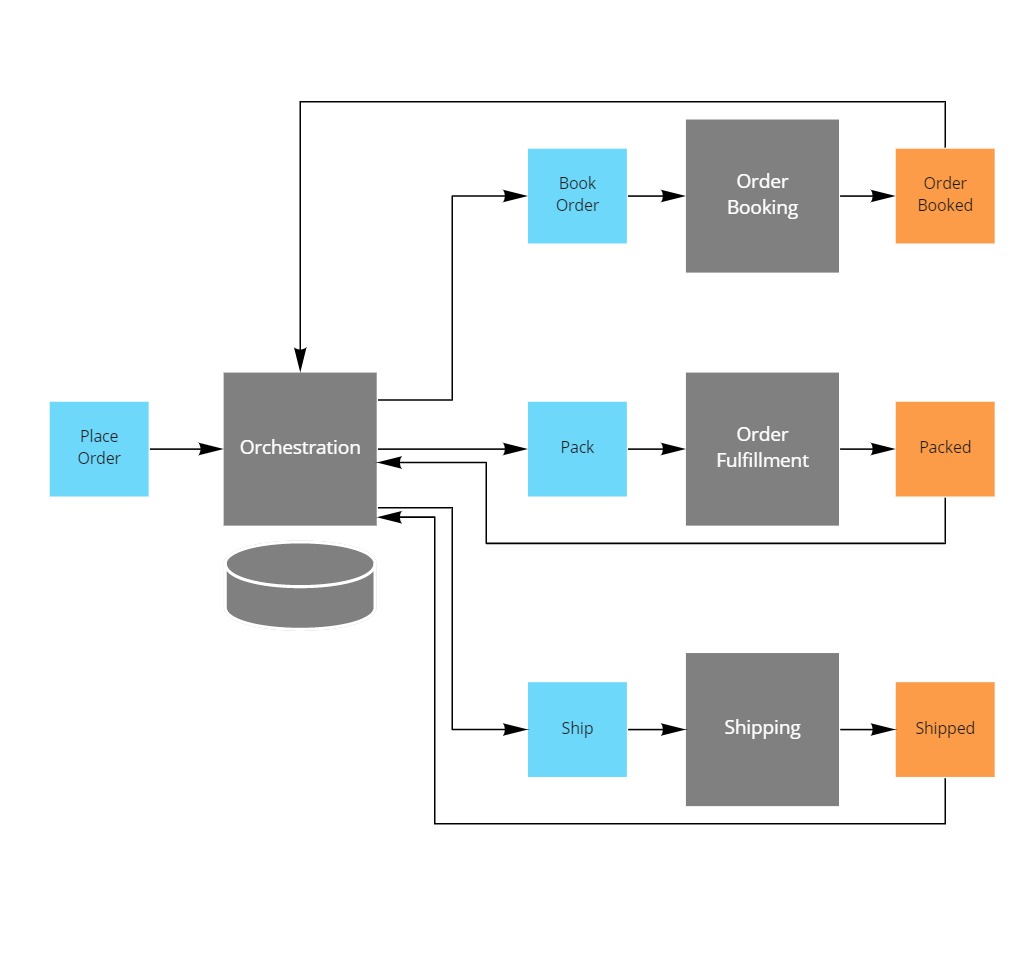

Orchestration

In an orchestration there is a central component that represents the process itself.

This component responds to a command by issuing more commands to other components in the system, while it keeps track of the state of the conversation between these partner components.

The orchestration needs to listen to responses, or events, emitted by the partner components in order to keep track of the state of the conversation.

When a partner component reports that it successfully executed a command, the orchestration will issue follow up commands.

When a partner component reports a failure, the orchestration needs to send subsequent commands to its partners to correct, or compensate for, the side effects of any previously executed command.

These compensating commands can in turn fail as well, resulting in more complex compensation logic.
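A minimal sketch of an orchestration with compensation. The `Step` and `Orchestrator` names are hypothetical, commands are modelled as plain function calls, and the sketch deliberately ignores the case in which a compensation fails as well.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    name: str
    execute: Callable[[str], None]     # command sent to a partner component
    compensate: Callable[[str], None]  # command that undoes its side effects


class Orchestrator:
    """Central component that represents the process and tracks its progress."""

    def __init__(self, steps):
        self._steps = steps

    def run(self, order_id: str) -> str:
        completed = []
        for step in self._steps:
            try:
                step.execute(order_id)
            except Exception:
                # A partner reported a failure: compensate in reverse order.
                for done in reversed(completed):
                    done.compensate(order_id)
                return f"failed at {step.name}"
            completed.append(step)
        return "completed"
```

The orchestrator has to remember which steps completed in order to know what to compensate, which is exactly the conversation state that a choreography does not need to track centrally.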

And what about sagas?

Depending on who you talk to, the word Saga might mean an orchestration or a choreography.

This word has caused quite a stir in the community in the past, so I have tried to avoid it ever since.

Final notes

I have roughly ordered the patterns in order of personal preference, but each of them has its time and place in the design of most systems.

If you are interested in seeing implementation details of how these patterns can be implemented using MessageHandler, check out the quickstarts below.